Learning tools

-

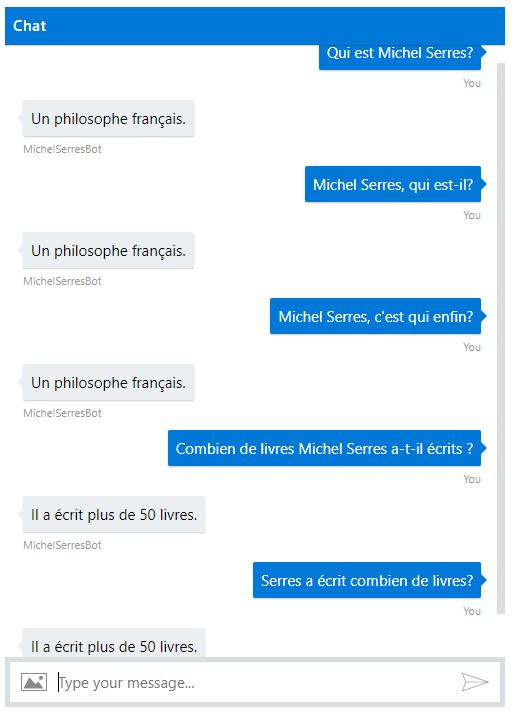

My first experience of creating a chatbot, and how bots might be used in teaching foreign languages

In preparation for the next academic year (which in Australia will begin in February), I am exploring new ways of assessing students in foreign language units, especially at first-year level. Last Friday I created a chatbot using Microsoft’s QnA Maker and the Azure cloud computing platform. You can have a play with it the …

-

New Accumulator Test for Excel-based Vocabulary Learning Tool

I have added a new “Accumulator” test to Vocab Book, the Excel-based vocabulary learning tool I wrote last year. The new test simulates a tried-and-tested learning method: imagine a vocab book in two columns, with English words or phrases on the left and their target-language translations on the right. At school I used …

-

Suite of Excel-based study aids to download: Vocab Book, Revision Aid and Memorise It

I have now finished writing the suite of three Excel-based learning tools I’ve been working on for the past few weeks, and on this page I want to bring them together, summarise what they can do, and offer all the download links in one place. Vocab Book: Vocab Book is a powerful, fully-featured vocabulary …

-

First Vocab Book testimonials

Many thanks to those who have been using and testing Vocab Book. I have ironed out a couple of minor bugs. Thanks too to those who have sent through encouraging words about the workbook. Here are two of the first testimonials: I love the Excel vocab book […] I have sent the link to my Mum, …

-

Free new revision aid software – helps you learn dates, facts, formulae, equations and anything you can express as text

As a complement to Vocab Book and Memorise It, I have written a third Excel-based study tool, called Revision Aid. Here is the blurb: Revision Aid is a free Excel-based workbook to help you revise for tests and exams. You can use it for testing yourself on anything that can be expressed in a question …

-

Free new Excel-based memorisation aid and self-tester

As a spin-off from Vocab Book I have written a memorisation aid called Memorise It. Here is the blurb: Memorise It is a free Excel-based memory tool that helps you to remember facts, poetry, lines for a play, or any other text you need to commit to memory. Test yourself on your memory texts in …

-

Powerful and fully-featured Excel-based vocabulary learning tool

I have written an Excel workbook to help university students, school pupils and the rest of us to organise, learn and test knowledge of vocabulary and phrases in sixteen languages. It sits alongside its sister workbooks Memorise It and Revision Aid (for more information about the suite of workbooks, see here). Here is the blurb: