Michel Serres

-

AUDIO TALK: Michel Serres and the Parasitic Unmaking of Modernity

This is the audio of a talk I gave at the International Philosophical Seminar (IPS) in June 2022. It begins by showing how Michel Serres rethinks the foundational modern moment of the state of nature, and it then sketches a way of understanding modernity in terms of three recurring moments: a flattening, a division and

-

Podcast: “Renewing our Mental Models With Michel Serres”

In June 2022 I had the privilege of giving a keynote address for the NaturArchy: Towards a Natural Contract conference at the European Commission’s Joint Research Centre, with the title “Renewing our Mental Models with Michel Serres”. The talk is now available as a podcast below. Abstract: As our understanding of the world changes over

-

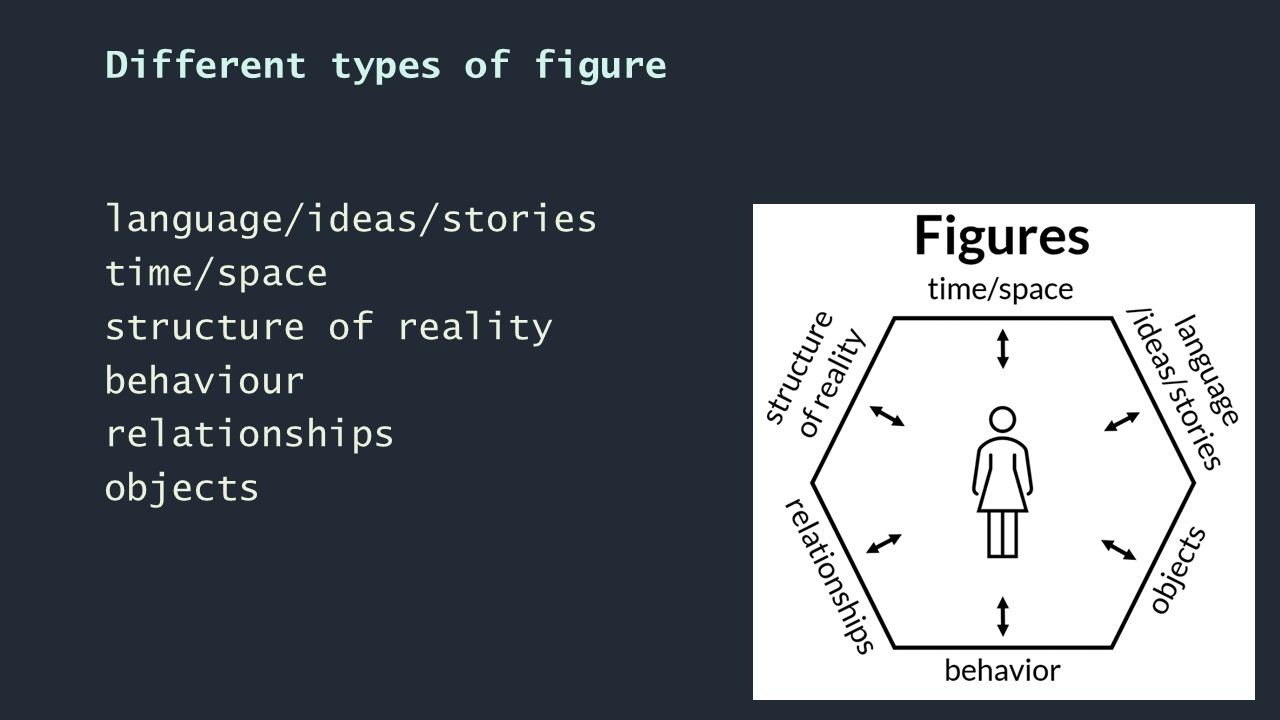

VIDEO: Towards a General Theory of Figures

This is a video of a paper I gave to the Research Unit of Architecture Theory and Philosophy of Technics, part of the Institute for Architectural Sciences in the Department for Architecture Theory and Philosophy of Technics ATTP at Vienna University of Technology, at the invitation of Prof. Vera Bühlmann. In the talk I bring

-

SPEP 2020 video and paper: Remembering and Thinking with Michel Serres

This September I had the privilege of taking part in a panel at SPEP 2020 (postponed until 2021) alongside Marjolein Oele and Brian Treanor. “Remembering and Thinking with Michel Serres” ranged over issues related to Serres’s contemporaneity, his natural contract idea, and the distinctiveness of his thought. Here is a recording of Brian’s paper and

-

Interview: Michel Serres, Philosophy and the Contemporary World

This week I was interviewed by David Webb for the 2020 SEP-FEP (Society of European Philosophy and Forum for European Philosophy) Conference coming up in November. The interview focused on the work of French philosopher Michel Serres (1930-2019), ranging over Serres’s style, politics, ecology, language, and my book Michel Serres: Figures of Thought (Edinburgh University Press,

-

Plato’s cave, Michel Serres, and imagining Nietzsche’s madman happy

I’ve been teaching Nietzsche’s madman this week in the context of a unit on literary modernism, and there has been some fascinating discussion among the students about the solar imagery in the passage. As a contribution to that discussion, here is an extract from Michel Serres: Figures of Thought in which I compare the image of

-

So you want to read Michel Serres? Start here

I recently received an email from someone wanting to get into Michel Serres’s writing in English translation, and asking where to start. Here are some thoughts, to which I hope to add over time. The suggestions of primary and secondary material below are not meant to be exhaustive, but to provide a jumping off point for

-

Video of my Stanford talk. Michel Serres: Thinking in Figures

The audio and slides below were recorded at my talk at Stanford University on January 30, 2020. I had the pleasure of speaking about the late Michel Serres to an audience most of whom had known him personally, some over many years. I presented the approach of Michel Serres: Figures of Thought and suggested why Serres’s

-

Michel Serres book excerpt: The end of the Neolithic

This is the seventh in a series of extracts from Michel Serres: Figures of Thought that I will be posting in the run-up to the book’s publication around April 2020. The archive of all the extracts will be accessible here. The following excerpt is from Chapter Six of Michel Serres: Figures of Thought, entitled ‘Ecology’

-

Michel Serres book excerpt: Pragmatogony, prehistory and the cadaverous object

This is the sixth in a series of extracts from Michel Serres: Figures of Thought that I will be posting in the run-up to the book’s publication around April 2020. The archive of all the extracts will be accessible here. The following excerpt is from Chapter Five of Michel Serres: Figures of Thought, entitled ‘Objects’

-

Michel Serres book excerpt: The origin of language

This is the fifth in a series of extracts from Michel Serres: Figures of Thought that I will be posting in the run-up to the book’s publication around April 2020. The archive of all the extracts will be accessible here. The following excerpt is from Chapter Four of Michel Serres: Figures of Thought, entitled ‘Language’

-

Michel Serres book excerpt: Percolation and multiple temporalities

This is the third in a series of extracts from Michel Serres: Figures of Thought that I will be posting in the run-up to the book’s publication around April 2020. The archive of all the extracts will be accessible here. The following excerpt is from Chapter Two of Michel Serres: Figures of Thought, entitled ‘Space

-

Michel Serres book excerpt: Let’s talk about Serres’ style

This is the fourth in a series of extracts from Michel Serres: Figures of Thought that I will be posting in the run-up to the book’s publication around April 2020. The archive of all the extracts will be accessible here. The following excerpt is from Chapter Three of Michel Serres: Figures of Thought, entitled ‘Serres’

-

Michel Serres book excerpt: Serres’ algorithmic universal

This is the second in a series of extracts from Michel Serres: Figures of Thought that I will be posting in the run-up to the book’s publication around April 2020. The archive of all the extracts will be accessible here. Serres’ algorithmic universal In addition to the sharp contrast between Cartesian analysis and Leibnizian combination,

-

Representing French and Francophone Studies with Michel Serres

I am delighted that my article “Representing French and Francophone Studies with Michel Serres” has just been published in the latest number of the Australian Journal of French Studies. Many thanks to Ash, Leslie and Gemma at the ANU who worked hard on the editing and wrote a splendid introduction to the AJFS special edition.

-

Michel Serres book excerpt: Serres, Leibniz, and umbilical thinking

This is the first of a number of extracts from Michel Serres: Figures of Thought that I will be posting in the run-up to the book’s publication around April 2020. The archive of all the extracts will be accessible here. Serres and Leibniz Weighing in at 800 pages and around 300 000 words, Le Système

-

Why Michel Serres is, and is not, an ecological thinker

In this excerpt from Michel Serres: Figures of Thought I address the question of whether Serres should be considered an “ecological” thinker.

-

Kant, Foucault and Serres on the a priori

Michel Serres’ “objective transcendental” naturalises the a priori, taking a different path both to Kant in the Critique of Pure Reason and to Foucault’s “historical a priori”

-

Why Michel Serres? A Personal Reflection

On the day of Michel Serres’s death, I reflect on what drew me to write on this beguiling, prescient, inimitable thinker

-

Michel Serres and film 4: Jean-Luc Godard’s Alphaville

This is the fourth of four undergraduate lectures in which I explore how the thought of Michel Serres can inform film studies. I embarked upon the lectures as a speculative experiment, but in writing them I became convinced that there are rich resources in Serres’s thought for generating novel and engaging readings of films that often

-

Michel Serres and film 3: Krzysztof Kieślowski’s Trois Couleurs Bleu

This is the third of four undergraduate lectures in which I explore how the thought of Michel Serres can inform film studies. I embarked upon the lectures as a speculative experiment, but in writing them I became convinced that there are rich resources in Serres’s thought for generating

-

Michel Serres and film 2: Agnès Varda’s Sans toit ni loi

This is the second of four undergraduate lectures in which I explore how the thought of Michel Serres can inform film studies. I embarked upon the lectures as a speculative experiment, but in writing them I became convinced that there are rich resources in Serres’s thought for generating novel and engaging readings of films that often

-

Michel Serres and film 1: Agnès Varda’s Cléo de 5 à 7

This is the first of four undergraduate lectures in which I explore how the thought of Michel Serres can inform film studies. I embarked upon the lectures as a speculative experiment, but in writing them I became convinced that there are rich resources in Serres’s thought for generating novel and engaging readings of films that often

-

Michel Serres book project update: Draft Introduction

For the past three and a half years I have been working on a monograph on the thought of Michel Serres. It has been an exhilarating and exhausting project, in the course of which I have largely forgotten what it feels like to be anywhere near an intellectual comfort zone. During these years I have

-

French Philosophy Today paperback now shipping

I just received my copy of French Philosophy Today in paperback. You can find it on Amazon here. Alain Badiou, Quentin Meillassoux, Catherine Malabou, Michel Serres and Bruno Latour: this comparative, critical analysis shows the promises and perils of new French philosophy’s reformulation of the idea of the human. See here for chapter summaries.

-

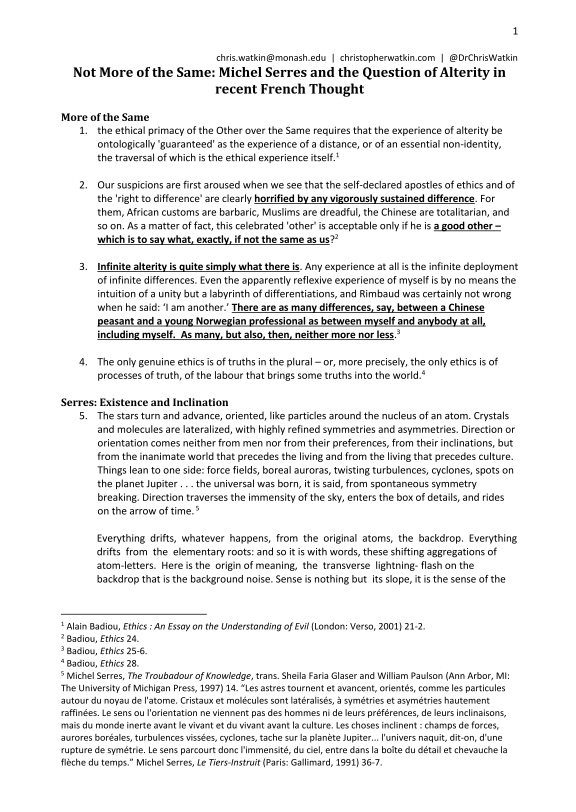

YouTube video of my paper on Michel Serres and the Question of Alterity in Recent French Thought, with improved audio

I have now tidied up the video from yesterday’s seminar on “Michel Serres and the Question of Alterity in Recent French Thought” and improved the quality of the audio. Here is the new YouTube version:

-

Download the handout for my live-streamed paper on Serres and alterity this coming Tuesday

If you are planning to follow my live-streamed paper on Michel Serres and alterity on Periscope this coming Tuesday, you might want to download the handout that will be distributed to seminar participants. Here it is: The handout contains fourteen quotations and two diagrams to which I will refer in the course of the paper. During

-

I’m planning to tweet live video of my research seminar on Michel Serres and the Question of Alterity next Tuesday

Next Tuesday I will be giving a seminar at Deakin University, Melbourne, on Michel Serres’s understanding of alterity. The paper comes from the first chapter of my book on Michel Serres, on which I have been able to do some more work recently. I’m trying to get permission from Deakin to tweet a live video

-

French Philosophy Today paperback now on Amazon pre-order

I am delighted to announce that the paperback edition of French Philosophy Today is now (finally!) available for pre-order on Amazon. The U.S. site has it at $39.95 and most European sites set the price at around €25. Curiously, amazon.co.uk has the paperback at £150, which I assume is a mistake soon to be corrected. Here is

-

Michel Serres: From Restricted to General Ecology

My project to write a critical introduction to the thought of Michel Serres continues to advance, and one small piece of the extensive Serresian jig-saw puzzle is of course the distinctive way in which he approaches ecological questions. A couple of years ago I was delighted to be approached by Daniel Finch-Race and Stephanie Posthumus to

-

French Philosophy Today to join Difficult Atheism on Edinburgh Scholarship Online

I’ve just learned that French Philosophy Today will shortly join Difficult Atheism on Edinburgh Scholarship Online. This, I hope, will come as good news to at least some of those who have been in touch with me about the price of the hardback edition.

-

French Philosophy Today reviewed at NDPR

French Philosophy Today has just been reviewed over at Notre Dame Philosophical Reviews. Here are some highlights: Gilles Deleuze and Félix Guattari famously defined philosophical production as concept creation. If they are correct, then Watkin’s work is not just a scholarly commentary of philosophy but also itself an inventive philosophical work. If Alain Badiou, the

-

French Philosophy Today: Summary of Chapter 5 – Michel Serres

This is the fifth in a series of posts providing short summaries of the chapters in my latest book, French Philosophy Today: New Figures of the Human in Badiou, Meillassoux, Malabou, Serres and Latour. For further chapter summaries, please see here. With Michel Serres’s universal humanism (Chapter 5) the argument returns to the question of host capacities in

-

In this Sunday’s Age and Sydney Morning Herald I’m quoted talking about robots, consciousness and Descartes

With the publication of my book on contemporary limits and transformations of humanity coming out next month I had the chance this week to talk with John Elder of The Sunday Age about the future possibility of rights for robots. John’s article came out today in The Sunday Age and the Sydney Morning Herald, with the title “What happens

-

Michel Serres’s The Parasite: A Reader’s Guide. 148 page document to download

Over the past few weeks I have been working my way through Michel Serres’s 1980 Le Parasite: a dense, poetic, brilliant text that seeks to tear down and rebuild the way we think about everything. In reading the book I kept a running list of intertexts to which Serres refers, a document that runs to some

-

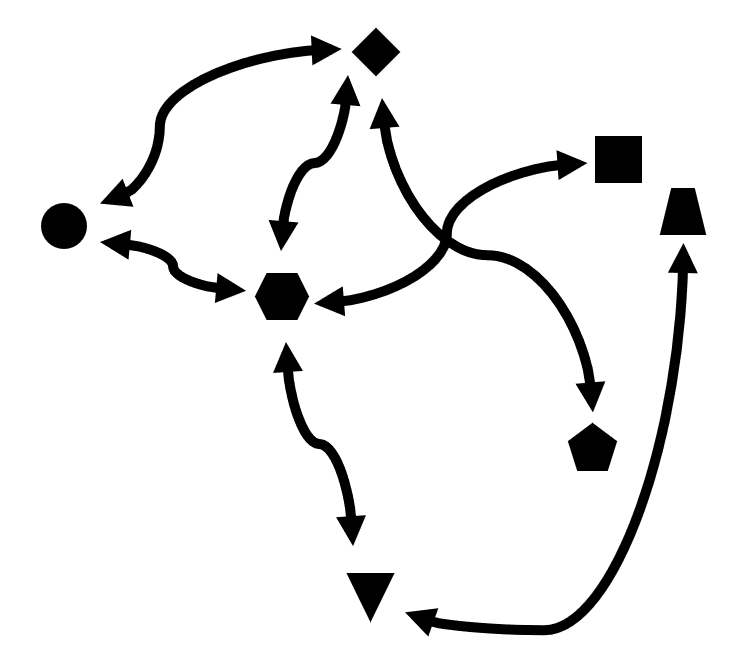

A diagrammatic snapshot of French philosophy from Magazine Littéraire, September 1977

Today I was given a copy of this edition of the Magazine Littéraire from September 1977 (thank you Philip). Its centrefold is a diagram seeking to represent flows of influence between contemporary philosophers. The table provides a fascinating snapshot… Marx is top and centre, flanked by Freud and Nietzsche. Influences are split between the two poles of

-

French Philosophy Today: New figures of the Human, low-res cover

Today I received the first low resolution mock-up of the cover for my new book: French Philosophy Today. New Figures of the Human in Badiou, Meillassoux, Malabou, Serres and Latour. Many thanks to Rebecca Mackenzie and Julien Palast for your wonderful work.

-

My article on Michel Serres, Biosemiotics and the “Great Story” of the Universe published in SubStance

My article “Michel Serres’ Great Story: From Biosemiotics to Econarratology” has just been published in SubStance. It is available from institutions with a subscription to Project Muse here. Abstract: In four key but as yet untranslated texts from 2001-2009, Michel Serres builds on his earlier biosemiotics with an econarratology he calls the ‘Great Story’ (Grand

-

Rethinking alterity and logocentrism after phenomenology with Serres’s L’Hermaphrodite: Sarrasine sculpteur (1987)

This post is part of the series of draft entries for a Michel Serres dictionary. Abbreviations: Conv: Serres and Latour, Conversations on Culture, Science and Time TI: Serres, Le Tiers instruit One of Serres’s three book-length engagements with literary authors, l’Hermaphrodite was written significantly later than Jouvences (1974, on Jules Verne) and Feux et signaux

-

Draft entry for a Michel Serres Dictionary: Le Système de Leibniz et ses modèles mathématiques (1968)

Le Système de Leibniz was published during the heady anni mirabiles of late 1960s French thought. It appeared in 1968, the same year as Roland Barthes’s short essay ‘The Death of the Author’, one year after Derrida’s Of Grammatology and Deleuze’s Difference and Repetition, and two years after Foucault’s The Order of Things. Like Derrida’s and

-

Michel Serres app now available on Google Play

I’ve written a little app to aggregate information from around the web (news, Twitter, YouTube, Google Scholar, Google Trends…) on Michel Serres. It’s nothing flash but it allows me quickly to scan various sources to see if there’s anything new on Serres, without having manually to visit plural URLs. It is free to download from

-

Michel Serres Today

Abstract. This is an expanded version of a paper originally given at the English and Theatre Studies research seminar at Melbourne University in May 2015, and it retains its oral tone. My intention both for the original paper and for this expanded version is to provide a first introduction to the work and thought of

-

Translation from Michel Serres’s thesis on Leibniz: “we must enrich our models of thinking which are, generally speaking, lamentably poor”

At the moment I am working my way through Michel Serres’s monumental Le Système de Leibniz et ses modèles mathématiques. I am struck by how many of the ideas that have come to be thought of as characteristically Serresian are already present implicitly or (more often than not) explicitly in this 800 page doctoral thesis. If it

-

Michel Serres Today: new paper uploaded to academia.edu

I have just uploaded a paper on Michel Serres to academia.edu. Here is the abstract: This is an expanded version of a paper originally given at the English and Theatre Studies research seminar at Melbourne University in May 2015, and it retains its oral tone. My intention both for the original paper and for this

-

Michel Serres: “There are two kinds of philosopher: there are philosophers who shackle you and philosophers who free you”

Peter Hallward: Heidegger’s works on language, and on being-with, did they have any value for you? Michel Serres: I was so busy with sciences and techniques that it was very difficult to throw myself into an author who refused them wholesale. There are two kinds of philosopher: there are philosophers who shackle you and philosophers

-

Consolidated list of all the Michel Serres primary bibliography posts

All the categories for the comprehensive Michel Serres primary bibliography are now up and running. I will keep adding titles over the coming months as I come across them, but in order to make the bibliography easier to navigate I have gathered below links to all the sections, as well as to the entire bibliography.